While commercial software such as SAS (SAS Institute, Cary, NC, USA) and Stata (StataCorp, College Station, TX, USA), as well as open-source tools, offer systems for reproducible computations, these may present significant challenges. Both open-source and commercial tools can face a number of barriers: 1) the tool focuses on one dimension of the reproducibility problem rather than offering a complete system, 2) the tool may be hard to identify, 3) tools may differ subtly in capabilities, making it hard for users to select among them, 4) documentation or support is in short supply, and 5) the tools require a degree of sophistication that beginners lack.

Before the introduction of R, Becker and Chambers (1988) proposed a framework, called the AUDIT S-plus system (Becker et al., 1988), for auditing data analyses that could replicate and verify calculations. Their auditing system would not only extract the computing steps required to produce the analysis, but would also identify patterns in the creation and use of particular objects within the directed acyclic graph of computations. Currently, many R packages facilitate reproducible analyses through literate programming or by tracking computational results. Some of these are listed within the CRAN Task View: Reproducible Research (Zeileis, 2005). The knitr (Xie, 2015) and rmarkdown (Allaire et al., 2017; Baumer et al., 2014) packages are widely used and enable literate programming by weaving R code and results into the same document in many formats. Tracking computational results with the cacher package (Peng, 2008) can be useful for efficient storage and reproducibility, and the rctrack package (Liu and Pounds, 2014) tracks computations, including random seeds, in a read-only format without modification of existing R code.
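The auditing idea attributed to Becker and Chambers, extracting the steps needed to produce a result from a directed acyclic graph of computations and seeing where each object is used, can be illustrated with a small sketch. This is not the AUDIT system or any of the R packages named here; the object names and the `DEPENDS_ON` mapping are hypothetical, and Python's standard `graphlib` module (Python 3.9+) stands in for whatever graph machinery a real tool would use.

```python
from graphlib import TopologicalSorter

# Hypothetical analysis: each object maps to the objects it was computed from.
DEPENDS_ON = {
    "raw_data": set(),
    "clean_data": {"raw_data"},
    "model_fit": {"clean_data"},
    "summary_table": {"clean_data"},
    "figure": {"model_fit"},
}


def steps_to_produce(target, depends_on):
    """Return the objects needed for `target`, ordered so inputs come first."""
    needed = {}
    stack = [target]
    while stack:
        node = stack.pop()
        if node not in needed:
            # Keep only the part of the graph that the target relies on.
            needed[node] = set(depends_on[node])
            stack.extend(needed[node])
    return list(TopologicalSorter(needed).static_order())


def used_by(depends_on):
    """Invert the graph: for each object, the set of objects that consume it."""
    consumers = {name: set() for name in depends_on}
    for name, inputs in depends_on.items():
        for source in inputs:
            consumers[source].add(name)
    return consumers
```

Calling `steps_to_produce("figure", DEPENDS_ON)` walks back from the figure to the raw data and omits `summary_table`, which the figure does not depend on, while `used_by(DEPENDS_ON)` shows which downstream objects would be affected if an input changed.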
Reproducibility, accountability, and transparency are increasingly accepted as core principles of science and statistics. Furthermore, transparency and reproducibility are deemed necessary by the American Statistical Association’s Ethical Guidelines for Statistical Practice (American Statistical Association, 2016). While some of the impetus for the interest in reproducibility has come from high-profile incidents that reached the general public (Carroll, 2017), there is a consensus that reproducibility is fundamental to scientific communication and to the acceleration of the scientific process (Wilkinson et al., 2016). There have been significant strides in improving the set of available tools for constructing reproducible analyses, including the concept and implementation of literate computing, version control systems, and mandates by journals and sponsors that create standards for submitting data, code, and systems for reproducibility. Also, interest in data science has expanded the number of researchers and students who are undertaking data analyses, which underscores the need for tools that are manageable across a broad range of user expertise.

An accountable unit is a data file (statistic, table, or graphic) that can be associated with a provenance, meaning how it was created, when it was created, and who created it. This is similar to the ‘verifiable computational results’ (VCR) concept proposed by Gavish and Donoho. Both accountable units and VCRs are version controlled, sharable, and can be incorporated into a collaborative project. However, unlike VCRs, accountable units use file hashes and do not involve watermarking or public repositories. Reproducing collaborative work may be highly complex, requiring repeated computations on multiple systems from multiple authors; however, determining the provenance of each unit is simpler, requiring only a search using file hashes and version control systems.
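To make the notion of an accountable unit concrete, the following minimal sketch ties a result file to a provenance record (how, when, and by whom it was created) through a hash of its contents. It is an illustration only and not adapr's implementation: the function names, the in-memory `registry` dictionary, and the choice of SHA-256 are all assumptions, since the text says only that file hashes are used.

```python
import hashlib
from datetime import datetime, timezone
from pathlib import Path


def file_hash(path):
    """Return the SHA-256 hex digest of a file's contents (assumed algorithm)."""
    return hashlib.sha256(Path(path).read_bytes()).hexdigest()


def record_unit(registry, path, script, author):
    """Register a result file with how, when, and by whom it was created."""
    entry = {
        "file": str(path),
        "script": script,                                   # how it was created
        "created": datetime.now(timezone.utc).isoformat(),  # when it was created
        "author": author,                                   # who created it
    }
    # Key the record by content hash so it follows the file's contents, not its name.
    registry[file_hash(path)] = entry
    return entry


def find_provenance(registry, path):
    """Look up a file by content hash; returns None if unknown or since altered."""
    return registry.get(file_hash(path))
```

Because the lookup key is the content hash, a table or graphic that has been edited after it was registered no longer matches any record, which is what reduces a provenance check to a simple search.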

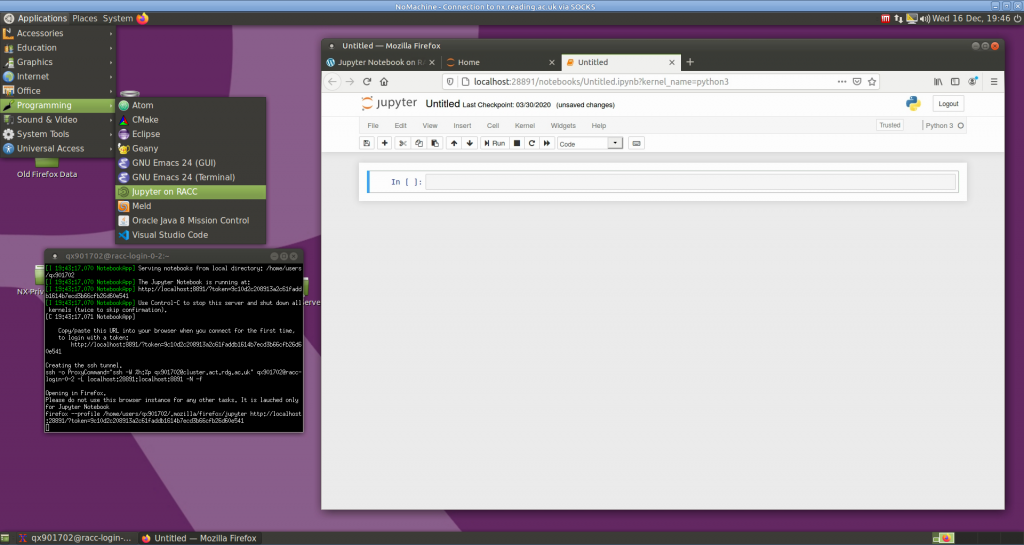

Efficiently producing transparent analyses may be difficult for beginners or tedious for the experienced. This implies a need for computing systems and environments that can efficiently satisfy reproducibility and accountability standards. To this end, we have developed a system, R package, and R Shiny application called adapr (Accountable Data Analysis Process in R) that is built on the principle of accountable units.
